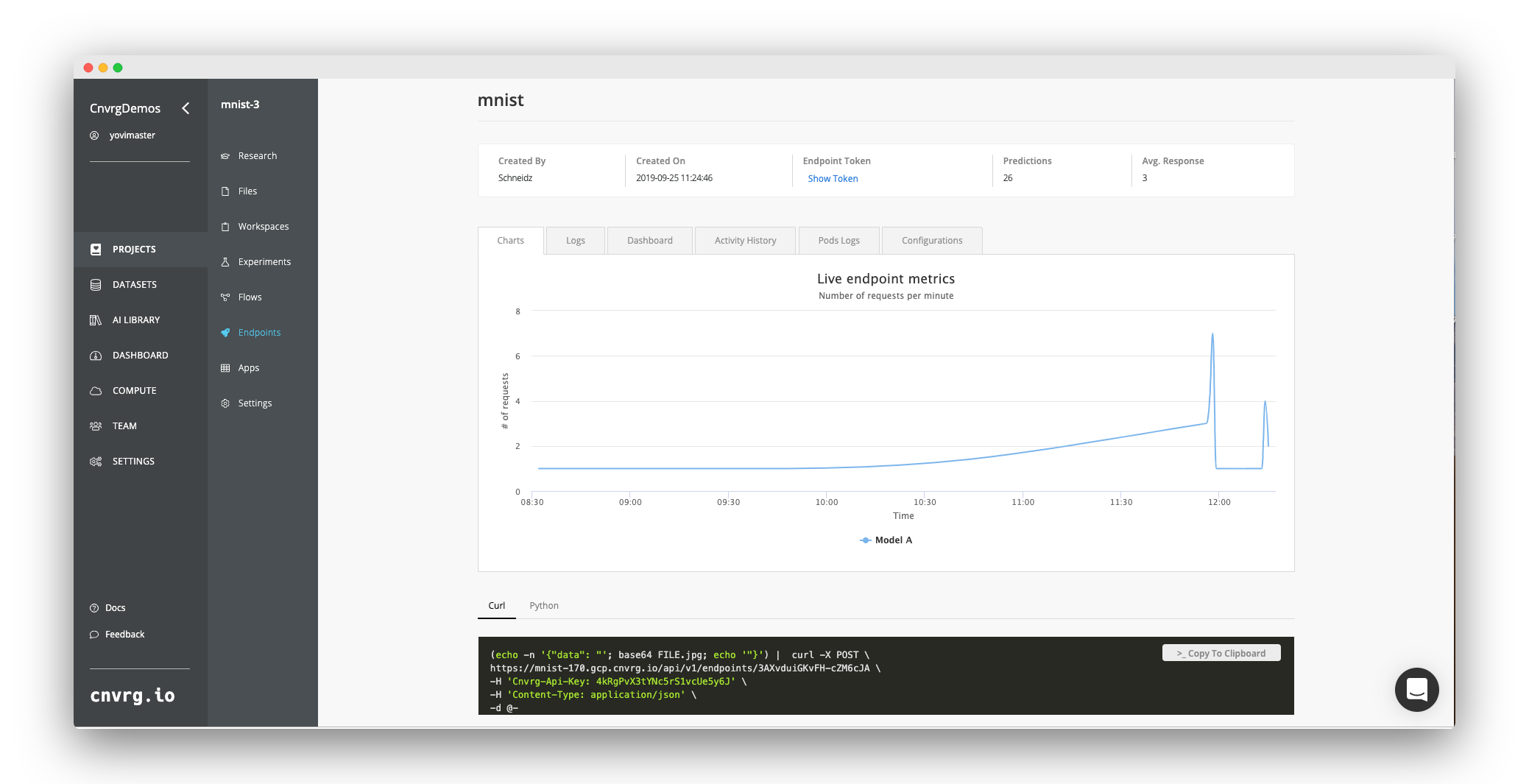

Monitor your machine learning models’ performance in production

Once a model is deployed, you are responsible for ensuring its reliability and performance in production. That means that in addition to system monitoring, you should be tracking its ML health and vitals, such as accuracy, bias, and variance, as new data comes in. In this online workshop, we’ll discuss how to build a system to monitor your machine learning models in production on Kubernetes. You’ll learn how to keep track of different models and their performance over time, and how to set up custom alerts for your models. We’ll discuss which types of variants to monitor and how to measure their performance. Join Leah Kolben, CTO of cnvrg.io, in this hands-on workshop on critical practices for monitoring your machine learning models in production. Using the power of Kubernetes, we’ll build a complete system for model tracking that ensures high-performing models in production.

What you’ll learn:

- Why we monitor models in production

- The critical vitals to track and monitor performance

- How to set up automated alerts

- How to set up Kubernetes for monitoring

- How to use tools like Grafana and Kibana to monitor and visualize your system and ML health
